How to Fine-tune Llama 2 with LoRA for Question Answering: A Guide for Practitioners

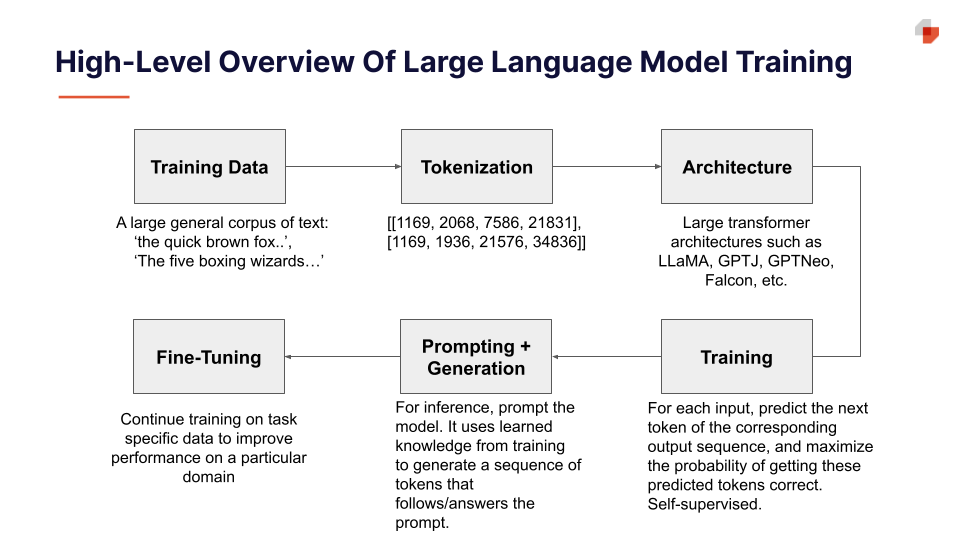

Learn how to fine-tune Llama 2 with LoRA (Low-Rank Adaptation) for question answering. This guide walks through the prerequisites and environment setup, loading the model and tokenizer, and configuring quantization.
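To give a rough sense of why LoRA makes fine-tuning cheaper, the sketch below counts the trainable parameters a low-rank adapter adds. LoRA freezes a weight matrix of shape d_out x d_in and trains two small matrices, B (d_out x r) and A (r x d_in), whose product is added to it. The specific numbers are assumptions for illustration and are not taken from the guide: a hidden size of 4096 and 32 decoder layers (the published Llama 2 7B configuration), rank 8, and adapters on two square attention projections per layer. The function name `lora_trainable_params` is hypothetical.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in one LoRA adapter: B is (d_out x rank), A is (rank x d_in)."""
    return rank * (d_out + d_in)


# Assumed values for illustration (Llama 2 7B dimensions, a common LoRA setup).
HIDDEN_SIZE = 4096       # width of each attention projection
NUM_LAYERS = 32          # decoder layers
ADAPTED_PER_LAYER = 2    # e.g. the query and value projections
RANK = 8                 # LoRA rank r

per_matrix = lora_trainable_params(HIDDEN_SIZE, HIDDEN_SIZE, RANK)
total_lora = per_matrix * ADAPTED_PER_LAYER * NUM_LAYERS
full_per_matrix = HIDDEN_SIZE * HIDDEN_SIZE  # parameters in the frozen matrix

print(per_matrix)                     # 65536
print(total_lora)                     # 4194304
print(full_per_matrix // per_matrix)  # 256
```

Under these assumptions each adapter is 256 times smaller than the matrix it modifies, and the whole model trains about 4.2 million parameters. Raising the rank or adapting more projections scales that count linearly.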

S_04. Challenges and Applications of LLMs - Deep Learning Bible

FINE-TUNING LLAMA 2: DOMAIN ADAPTATION OF A PRE-TRAINED MODEL

arxiv-sanity

Enhancing Large Language Model Performance To Answer Questions and

Leveraging qLoRA for Fine-Tuning of Task-Fine-Tuned Models Without

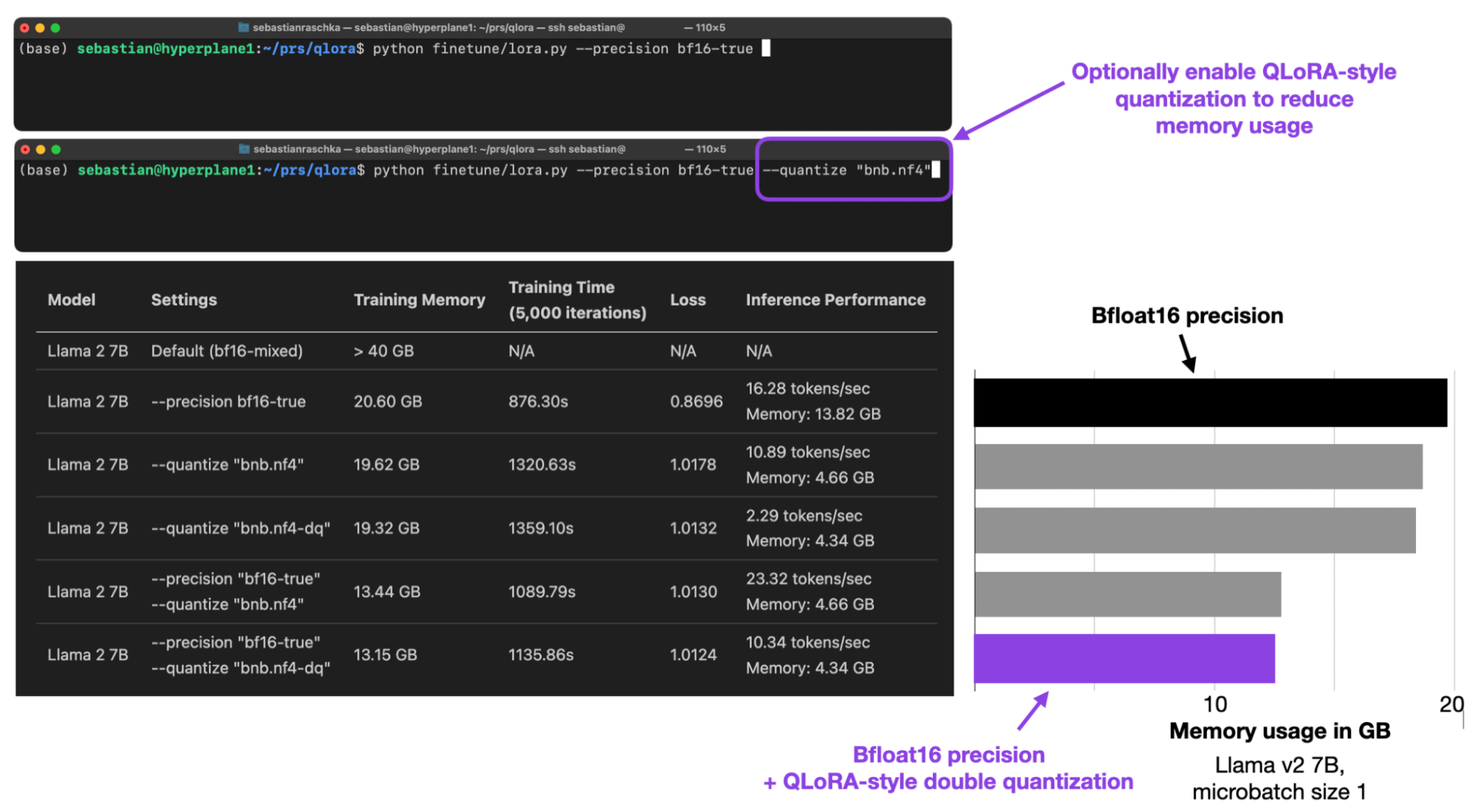

Practical Tips for Finetuning LLMs Using LoRA (Low-Rank Adaptation)

Alham Fikri Aji on LinkedIn: Back to ITB after 10 years! My last visit was as a student participating…

Fine Tuning Llama 2 using QLoRA and a CoT Dataset

Fine-tuning Large Language Models (LLMs) using PEFT

Sanat Sharma on LinkedIn: Llama 3 Candidate Paper

New LLM Foundation Models - by Sebastian Raschka, PhD

10 Things You Need To Know About LLMs - Predibase

Fine-tune Llama 2 for text generation on SageMaker

![What is LLM Fine-Tuning? – Everything You Need to Know [2023 Guide]](https://a.storyblok.com/f/139616/1200x800/b050784413/thumbnail.webp)