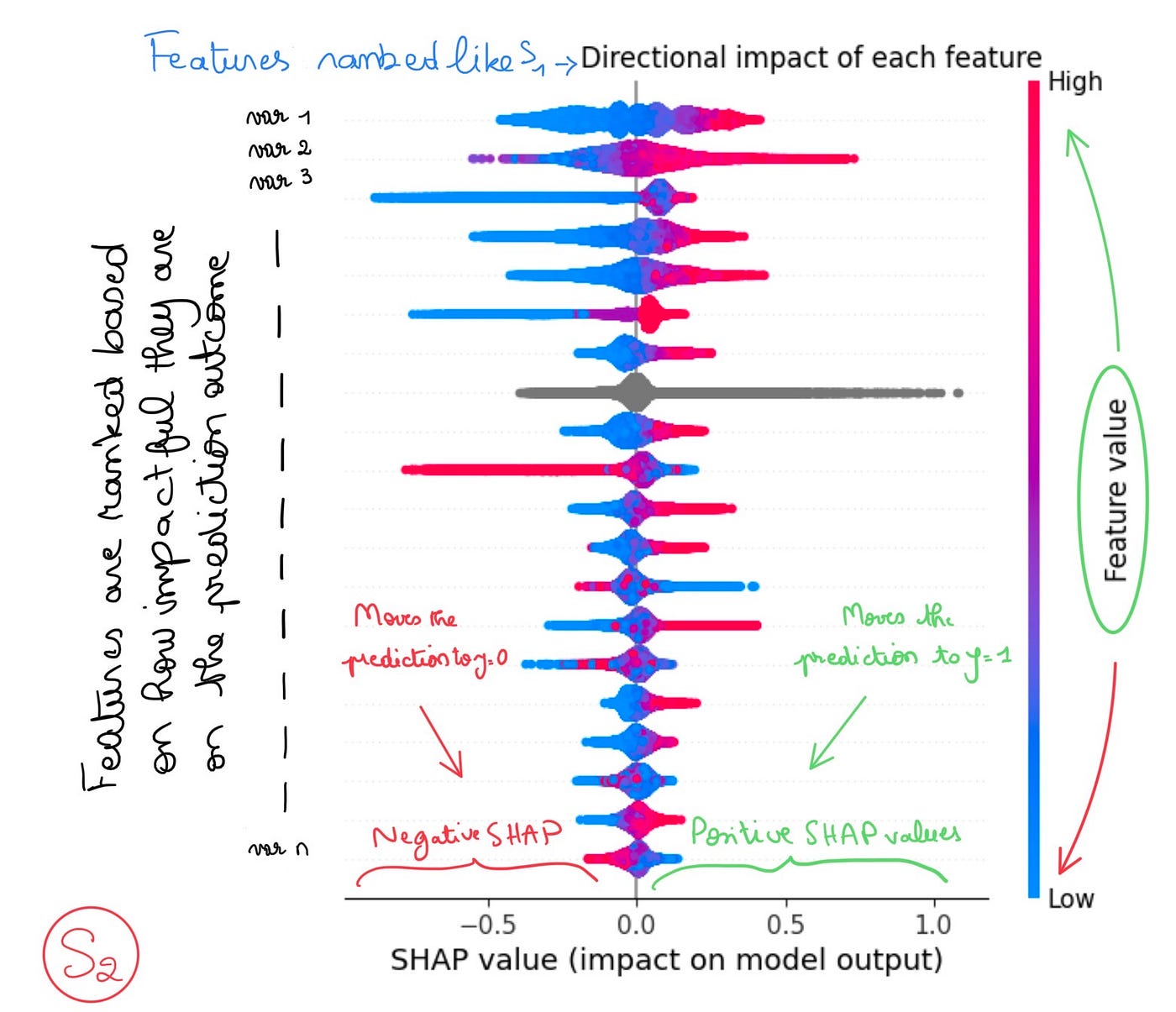

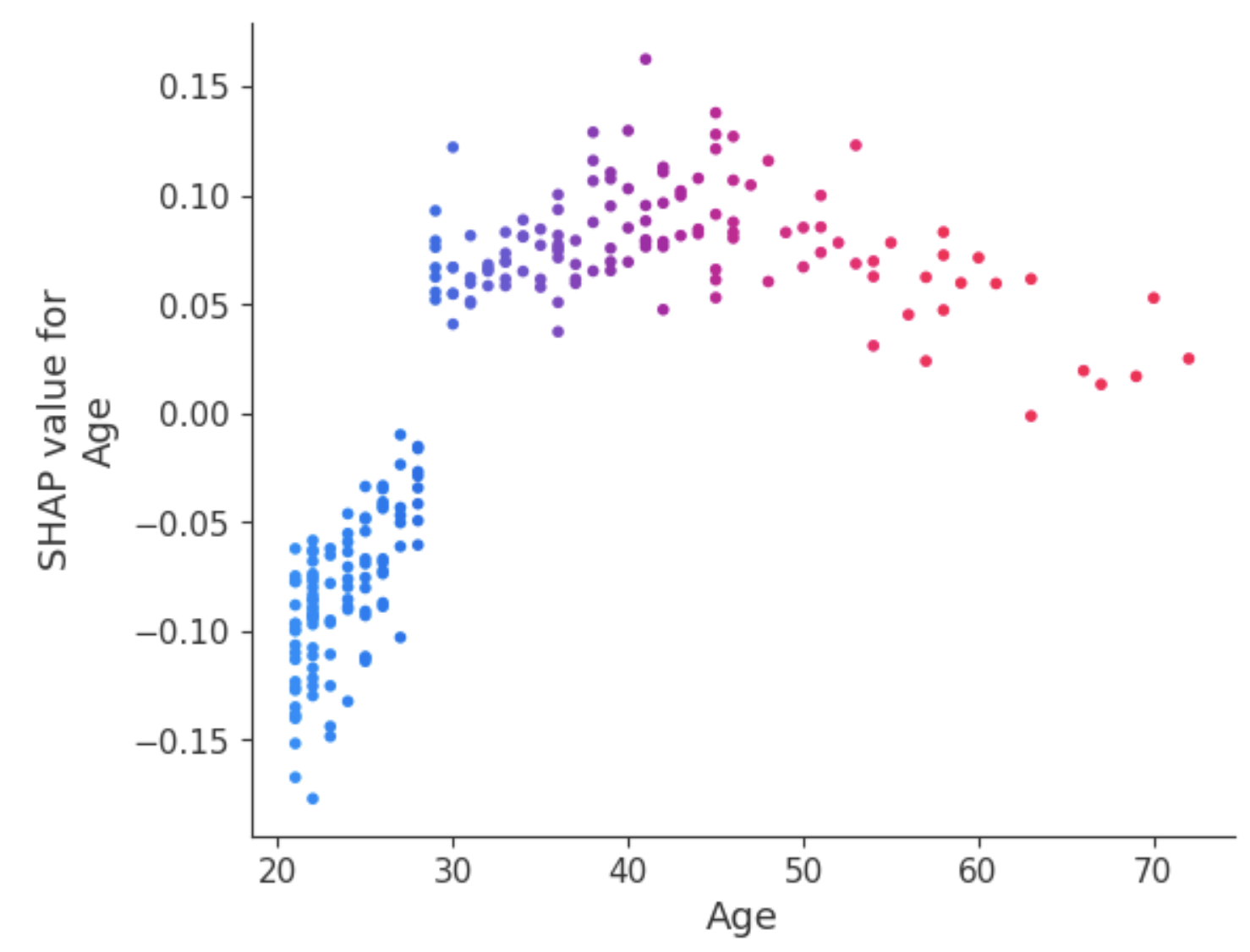

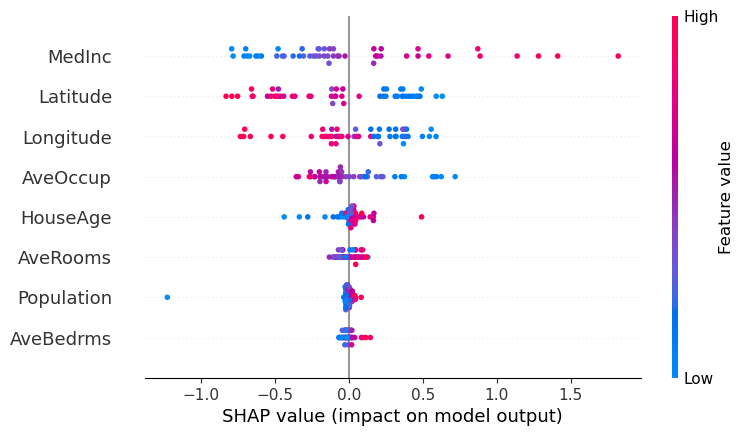

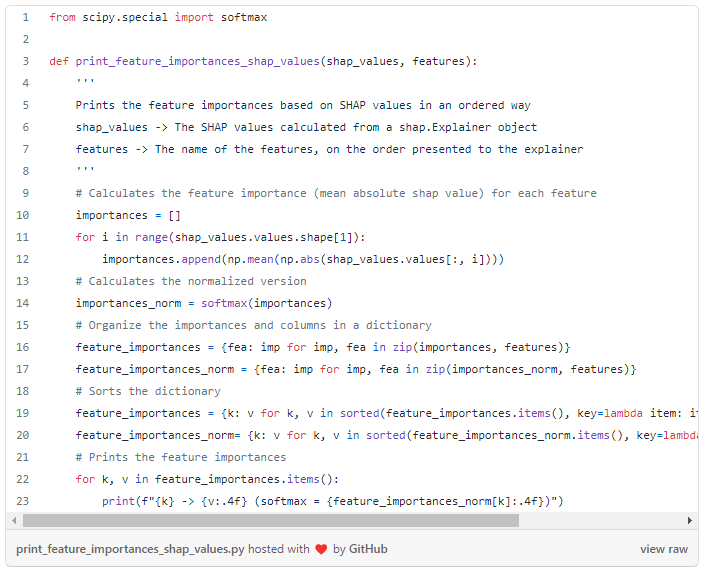

Using SHAP Values to Explain How Your Machine Learning Model Works, by Vinícius Trevisan

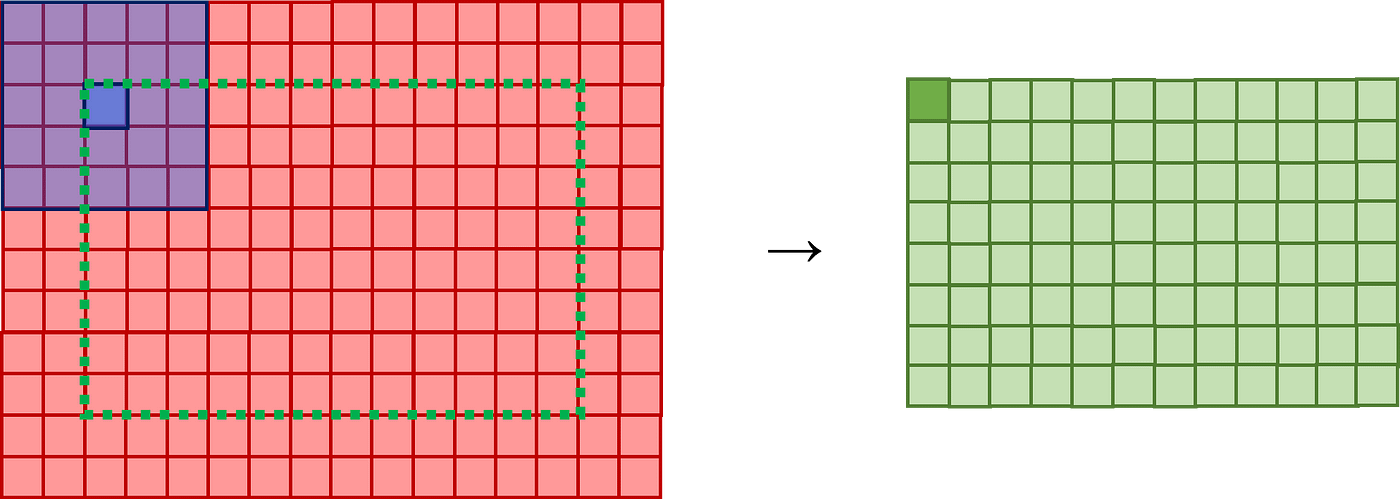

Understanding Convolutional Neural Networks (CNNs), by Vinícius Trevisan

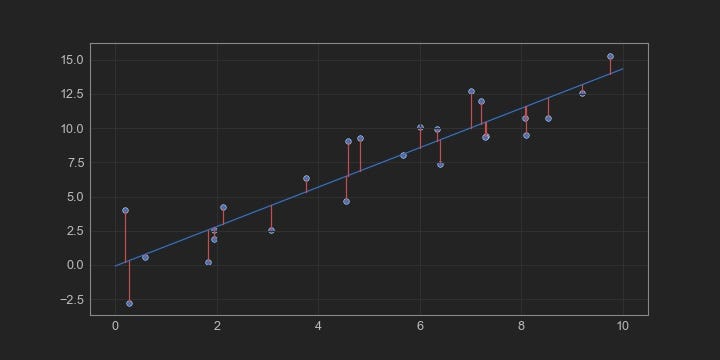

Comparing Robustness of MAE, MSE and RMSE, by Vinícius Trevisan

Introduction to Explainable AI (Explainable Artificial Intelligence or XAI) - 10 Senses

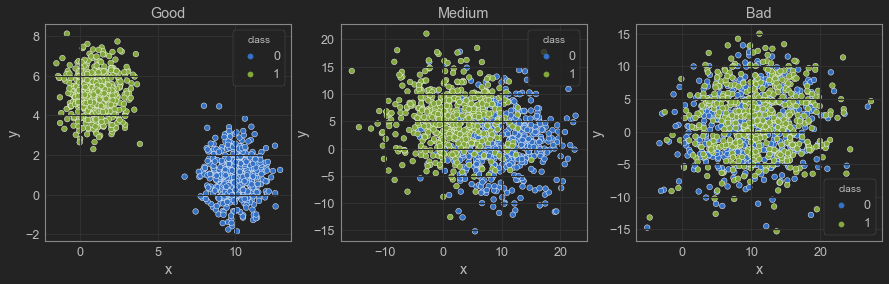

Is your ML model stable? Checking model stability and population drift with PSI and CSI, by Vinícius Trevisan

Evaluating classification models with Kolmogorov-Smirnov (KS) test, by Vinícius Trevisan

Target-encoding Categorical Variables, by Vinícius Trevisan

List: explainability, Curated by Galkampel

The most insightful stories about Explainable Ai - Medium

Vinícius Trevisan – Medium

Is it correct to use the test data to produce the Shapley values? I believe we should use the training data, since we are explaining the model, which was configured
